Please Note: Once again this is a particularly long post, so subscribers will find the emailed version of this issue cut off at the end. Please go to the web version to read the complete issue.

If You Find AgenticMSP Valuable: Please recommend AgenticMSP to your friends and colleagues. Also, please offer up your comments, observations, questions, or any other responses in the chat below. Let’s get a hearty conversation going!

Remember when you first experienced describing a software feature in plain English and watching your AI assistant just... build it? That rush. That disbelief. That feeling of “I can make anything now.”

That was vibe coding. And it was real. And it was wonderful.

It was also, as it turns out, just the party before the real work began.

The Year That Changed Everything

In February 2025, Andrej Karpathy coined the phrase “vibe coding” to describe a new way of building software: describe what you want in plain English, let an AI generate the code, run it, ask for changes and improvements, then repeat. It was loose, fast, and enormously fun. It was also, in retrospect, really only Phase One.

In February 2026, almost exactly one year after he invented the term, Karpathy announced that the era of vibe coding was effectively over.

On X, he posted what has since become a widely cited framework for what comes next: “Today (1 year later), programming via LLM agents is increasingly becoming a default workflow for professionals, except with more oversight and scrutiny. The goal is to claim the leverage from the use of agents but without any compromise on the quality of the software.”

He proposed a new name for this evolved practice: agentic engineering. His explanation of the term is worth quoting in full, because it defines a hiring profile, a service category, and a competitive moat all at the same time:

“‘Agentic’ because the new default is that you are not writing the code directly 99% of the time. You are orchestrating agents who do and acting as oversight. ‘Engineering’ to emphasize that there is an art & science and expertise to it. It’s something you can learn and become better at, with its own depth of a different kind.”

In a separate post, Karpathy doubled down on the value of the discipline: “The leverage achievable via top tier ‘agentic engineering’ feels very high right now. It’s not perfect — it needs high-level direction, judgment, taste...”

Those last three words — direction, judgment, taste — are the crux of everything that follows.

Boris Cherny Draws the Line

If Karpathy named the new era, Boris Cherny, the head of Anthropic’s Claude Code, is truly living it. In early 2026, Cherny revealed that 100% of his production code is now generated by AI, and that he hadn’t written a line of code by hand since October 2025. Speaking at Anthropic’s developer conference and in a widely circulated Business Insider interview, Cherny made clear that he finds the phrase “vibe coding” actively counterproductive in that it implies sloppiness, impermanence, and a lack of rigor.

Cherny’s pushback is significant precisely because Claude Code is one of the tools that enabled vibe coding to go mainstream in the first place.

He isn’t sentimental about the term. When asked what should replace it, he reportedly put the question to Claude itself, which suggested “agentic engineering,” echoing Karpathy. Cherny’s broader prediction that the job title “software engineer” will begin to disappear in 2026, absorbed into more hybrid roles, is a signal that the identity of the profession is in transition, not just its tooling.

His most pointed observation for anyone thinking about the workforce implications: engineers at Anthropic are no longer writing code. They are reviewing, directing, and governing the agents that do the work.

What Spec-Driven Development Looks Like in Practice

So, what does “better” look like in practice?

It looks rather familiar to anyone who has spent time developing software or participating in its development. Once discovery is complete, the next step is to create a functional specification document.

A recent piece in Towards Data Science by Mariya Mansurova offers the clearest practical illustration of agentic engineering in action. Mansurova spent 4.5 hours building a fully functional personal fitness tracking web application, from idea to deployed product, using Claude Code inside VS Code, guided entirely by the principles of spec-driven development (SDD).

The contrast with vibe coding is instructive. Vibe coding, as Mansurova describes it, looks like this:

· Write a short prompt

· Wait for the agent to create the code

· Discover it didn’t do what you wanted

· Iterate in the same chat window requesting changes

· Lose context

· Start over

It works for throwaway scripts. It does not scale to production software, multi-developer teams, or anything where architectural decisions need to persist.

Spec-driven development inverts the workflow. Before a single line of code is generated, the developer produces a constitution, a set of structured markdown documents stored in the repository alongside the code. This constitution includes:

· A mission document (the why of the project)

· A tech stack document (the how)

· A roadmap (the what and when)

Only after this architecture is validated through review, iteration, and even a second-agent “critique pass” does implementation begin.

Each feature phase then follows a disciplined cycle: plan, implement, validate. Crucially, the specification is updated continuously and serves as the source of truth for both human developers and AI agents. Context doesn’t decay across sessions because it’s externalized into the repository, not kept alive in a chat window.
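To make the constitution idea concrete, here is a minimal Python sketch of how a team might scaffold those documents in a repository and track the plan, implement, validate cycle. The folder name `specs`, the three file names, and the helper functions are all assumptions for illustration; neither Mansurova’s article nor Spec Kit is the source of this layout.

```python
from pathlib import Path
from typing import Optional

# Hypothetical file names and starter text: the article names the three
# documents (mission, tech stack, roadmap) but not how they are stored.
CONSTITUTION_DOCS = {
    "mission.md": "# Mission\n\nWhy this project exists and who it serves.\n",
    "tech-stack.md": "# Tech Stack\n\nHow it will be built: languages, frameworks, hosting.\n",
    "roadmap.md": "# Roadmap\n\nWhat ships, and in which phase.\n",
}

# The per-feature cycle described in the text.
PHASE_STEPS = ("plan", "implement", "validate")


def scaffold_constitution(repo_root: Path, folder: str = "specs") -> list[Path]:
    """Create any missing constitution documents and return the paths created."""
    target = repo_root / folder
    target.mkdir(parents=True, exist_ok=True)
    created = []
    for name, starter_text in CONSTITUTION_DOCS.items():
        path = target / name
        if not path.exists():  # never overwrite a spec someone has already edited
            path.write_text(starter_text, encoding="utf-8")
            created.append(path)
    return created


def missing_docs(repo_root: Path, folder: str = "specs") -> list[str]:
    """List constitution documents that are absent or empty."""
    target = repo_root / folder
    missing = []
    for name in CONSTITUTION_DOCS:
        path = target / name
        if not path.is_file() or not path.read_text(encoding="utf-8").strip():
            missing.append(name)
    return missing


def next_step(completed: list[str]) -> Optional[str]:
    """Return the next step in the plan -> implement -> validate cycle, or None when done."""
    for step in PHASE_STEPS:
        if step not in completed:
            return step
    return None
```

A check like `missing_docs` could run before any agent session starts, so implementation never begins against an incomplete constitution.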

Mansurova’s key observation captures the cultural shift perfectly: “With agentic engineering, the role of the developer shifts toward steering, reviewing, and making architectural decisions, rather than directly writing specifications or code.”

Simon Willison, the Django co-creator and one of the most respected voices in applied AI development, has been mapping this territory for months. In late 2025 he proposed “vibe engineering” as a middle term, structured enough to be serious, fast enough to stay agile, and has since published an evolving guide to Agentic Engineering Patterns, a practical catalog of the specific techniques that separate production-grade AI-assisted development from demos. His framing: automated testing, advance planning, comprehensive documentation, and disciplined version control habits aren’t optional enhancements to agentic engineering. They are what defines it.

JetBrains, working with DeepLearning.AI, has formalized this workflow into a short course, “Spec-Driven Development with Coding Agents.” GitHub has released Spec Kit, an open-source CLI tool that brings the SDD workflow to any agent environment, with 30 integrations already supported. The methodology is standardizing rapidly.

Why This Is an MSP Problem — and an MSP Opportunity

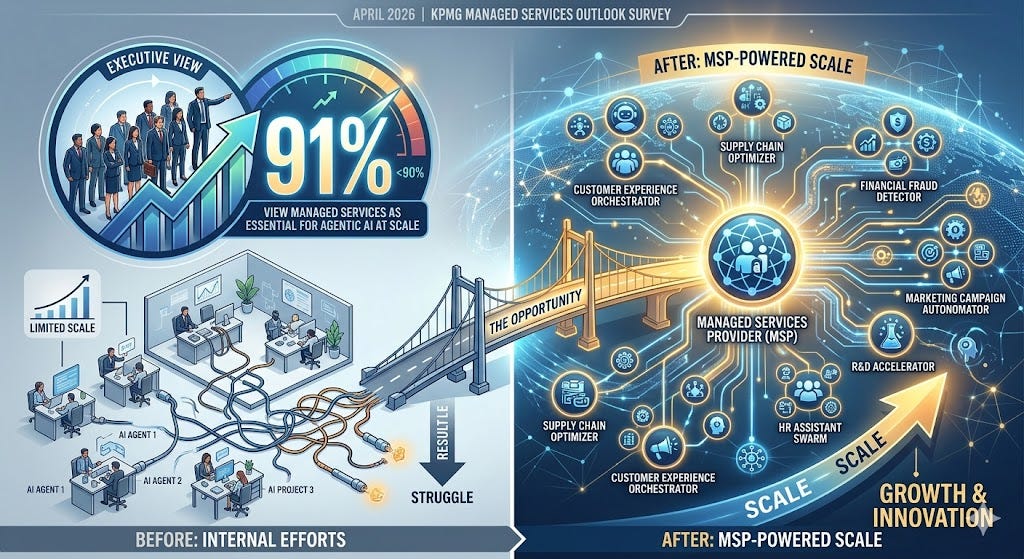

Here is the market signal MSPs cannot afford to ignore: in April 2026, KPMG published a managed services outlook survey finding that more than 90% of executives now view managed services as essential for delivering agentic AI at scale. The gap between wanting agentic AI capabilities and being able to build and govern them internally is enormous — and that gap is where managed services providers traditionally live.

But there is a critical catch. The managed services market for agentic engineering is not the same as the managed services market for cloud infrastructure, helpdesk support, or even earlier generations of AI tooling. Customers who are moving from vibe coding to agentic engineering are not looking for someone to provision compute or manage SLAs. They are looking for partners who can do three things that most MSPs do not yet do well:

1. Speak the language of functional specification before implementation. The clients beginning to invest in agentic engineering have internalized Karpathy’s framework: quality is non-negotiable, and quality requires upfront architecture. MSPs who lead with tool recommendations or time-and-materials development engagements will lose these conversations to firms that open with constitution workshops: collaborative sessions to define mission, tech stack, and roadmap before any agent writes a line of code. The JetBrains/DeepLearning.AI SDD curriculum is a reasonable starting point for building this practice. Investing in staff certifications here is not optional; it is a key differentiator.

2. Offer structured oversight, not just execution. Karpathy’s definition of agentic engineering is built around oversight and judgment. Cherny’s daily workflow is built around review and governance of AI-generated code, not the writing of it. The MSPs that will win agentic engineering projects are those that can demonstrate a repeatable governance model: How do you validate that what the agents produced actually matches the specification? How do you manage context across sessions, across developers, across feature phases? Customers who understand spec-driven development will ask these questions directly. MSPs need answers, backed by documented methodologies and processes, before those meetings happen.

3. Bring deep domain expertise, not just AI tooling. One of the most consistent themes across Karpathy’s posts, Cherny’s interviews, and Mansurova’s practical walkthrough is that agentic engineering amplifies human judgment; it does not replace it. Karpathy’s choice of the word “taste” is deliberate. What guides the agent is the human’s architectural sensibility, business context, and understanding of what “good” looks like in a specific domain. MSPs that have cultivated genuine vertical expertise in healthcare workflows, financial services compliance, manufacturing operations, or retail analytics have a structural advantage here that pure-play AI vendors do not. The constitution documents at the heart of SDD are only as good as the domain knowledge that goes into them.

The Transition No One Has Fully Priced In

The loudest conversation in the market right now is about whether AI replaces developers. That is the wrong question for MSPs. The right question is: who is qualified to act as the human-in-the-loop when the agents are doing the work?

Cherny’s statement that the “software engineer” title will start to disappear doesn’t mean engineering skills disappear. It means the job is being redefined around orchestration, oversight, and specification, exactly the skills that managed service providers are positioned to offer at scale, if they invest in building them now.

The KPMG HFS Horizons: Agentic Services, 2026 report documents that enterprise buyers are actively seeking partners to help them navigate this transition. GitLab expanded its MSP partner program in February 2026 explicitly to meet growing demand for agentic AI across the software lifecycle. The demand is there. Now you need to be.

What is less clear is whether most MSPs are ready to meet the demand. Organizations still leading with “we can help you implement Copilot” are speaking the language of 2024. The customers who have watched Karpathy’s posts, read Cherny’s interviews, and are beginning to experiment with Claude Code and Spec Kit are already asking different questions. They want partners who can help them build a development practice, not just a development tool stack, that is governed, reproducible, and genuinely production-grade.

What MSPs Should Do Right Now

The shift from vibe coding to agentic engineering creates a five-part playbook for MSPs willing to invest ahead of the curve:

1. Develop a spec-driven development practice. Train a dedicated team on SDD methodology. Build internal templates for constitution documents, feature phase plans, and validation checklists. Pilot the workflow on an internal project before selling it to customers.

2. Build governance and oversight frameworks. Define how you review AI-generated code to assure quality, how you track architectural decisions over time to maintain consistency, and how you manage context decay across long-running engagements. These are the questions sophisticated customers will ask.

3. Invest in domain expertise, not just tooling. Your competitive moat in agentic engineering is not the AI models you use; customers can access those directly. It is the accumulated domain knowledge that makes your specifications better than a generalist’s. Identify the verticals where you have real depth and build SDD offerings designed for those contexts.

4. Offer a “constitution workshop” as a lead service. Position the upfront specification and architecture work as a standalone, high-value, fee-based service. It is the natural entry point into an agentic engineering engagement and it demonstrates the discipline that differentiates you from commodity AI integrators.

5. Stay current with the practitioner community. Simon Willison’s Agentic Engineering Patterns guide, JetBrains’ SDD curriculum, GitHub’s Spec Kit, and the ongoing commentary from Karpathy and Cherny are where the methodology is being actively developed. The practitioners who will become your customers are following this work closely. You need to be leading the charge, not catching up.

The era of vibe coding gave everyone a taste of what AI-assisted development could feel like.

The era of agentic engineering is defining the results and superior business outcomes it needs to produce. MSPs that make that transition, from vibing to specifications, from tooling to governance, from execution to oversight, will find themselves at the center of one of the largest service market opportunities this channel has ever experienced. Those that don’t will find themselves selling yesterday’s answer to tomorrow’s question.

Sources drawn from: Mariya Mansurova, “From Vibe Coding to Spec-Driven Development,” Towards Data Science (May 2026); Andrej Karpathy posts on X (February 2026); Boris Cherny interviews, Business Insider and CNBC (May 2026); Simon Willison’s Agentic Engineering Patterns guide (simonwillison.net); KPMG Managed Services Outlook Survey 2026; HFS Horizons: Agentic Services 2026; GitLab MSP program expansion announcement (February 2026).

From Vibe Coding to Spec-Driven Development

HMC: This is the Mariya Mansurova article, “From Vibe Coding to Spec-Driven Development,” that I refer to in today’s lead article. Mansurova goes into much more detail about how to develop your ability to produce a meaningful and useful functional spec to drive your agentic development efforts.

Summary: The article documents a 4.5-hour end-to-end development session building a functional fitness app using LLM-assisted ‘spec-driven development’ — a structured alternative to ‘vibe coding’ (ad hoc, prompt-by-prompt AI coding). The author argues that providing LLM agents with a detailed specification document upfront produces far more coherent, maintainable code than iterative prompting without structure. The workflow involves writing a formal spec first, then using LLM agents to implement against it, resulting in a working app in under five hours. The piece highlights both the speed gains and the importance of human-defined structure to keep AI-generated code on track.

MSP Relevance: MSPs exploring AI-assisted development for building client tools, automations, or internal utilities will find the spec-driven approach practically useful. Rather than ad hoc AI coding that produces hard-to-maintain output, writing a structured spec first and feeding it to an LLM agent yields more reliable, reviewable code. This is relevant for MSPs who are building lightweight custom apps, automation scripts, or client portal features using AI coding assistants like GitHub Copilot, Cursor, or similar tools. The 4.5-hour timeline also sets realistic expectations for rapid prototyping. However, the article is focused on a consumer app example and doesn’t address enterprise or MSP-specific development contexts, limiting its direct applicability.

Recommended Action Items:

Adopt a spec-first workflow when using AI coding assistants for client automation or tool development projects

Create a reusable spec template for common MSP development tasks (e.g., RMM integrations, ticketing automations) to accelerate AI-assisted builds

Pilot spec-driven development on a small internal tool to benchmark time savings vs. traditional development

Source: https://towardsdatascience.com/from-vibe-coding-to-spec-driven-development/

Google Cloud is hiring an army of AI deployment engineers

HMC: Both OpenAI (DeployCo) and Google (hundreds of new implementation engineers) are making direct investments in enterprise AI implementation. That’s the space you’re working to expand into. This is not a future threat; it is a threat right now. MSPs must accelerate their AI practice development, certifications, and client relationships so they can compete against their own suppliers.

This is not new. I recently wrote about my own experience in 1981 when IBM decided to sell service contracts on their new IBM PC and XT. The channel was flabbergasted. An IBM exec explained to me that their offering the service validated the need, and that the channel could always beat them on price.

Let’s see if that’s true again now.

Summary: Google Cloud is aggressively hiring ‘forward-deployed engineers’ — a role focused on hands-on AI deployment directly with enterprise customers. This hiring push signals Google Cloud’s intent to accelerate AI adoption by embedding technical talent within customer environments to drive implementation and ROI. The strategy mirrors what firms like Palantir have done with embedded engineering. The move suggests Google Cloud sees a significant gap between AI interest and actual deployment, and is investing in human capital to bridge it. This is a broader market signal about the state of enterprise AI adoption.

MSP Relevance: This hiring trend reveals a critical market gap: enterprises want AI but struggle to deploy it effectively. MSPs are well-positioned to fill exactly this role for SMB and mid-market clients who won’t get Google’s forward-deployed engineers. The fact that a hyperscaler is investing heavily in deployment talent — not just product — validates the MSP value proposition around AI implementation services. MSPs should position themselves as the ‘forward-deployed AI engineers’ for their client base, offering hands-on deployment, integration, and ongoing optimization of AI tools. This also signals that Google Cloud partner programs may evolve to leverage MSPs in this deployment capacity.

Recommended Action Items:

Develop an AI deployment service offering that mirrors the ‘forward-deployed engineer’ model — hands-on implementation, not just licensing.

Monitor Google Cloud partner program updates for opportunities to become a certified AI deployment partner.

Build internal AI deployment competency by training staff on Google Cloud AI tools and Vertex AI platform.

Tutorial: How To Cut Claude Code Costs by 50% (Token-Saving Tricks That Actually Work)

HMC: Just last week I wrote about how cost control, which is usually the first concern, took its time to show up in AI. I pointed out that the geniuses at OpenAI spend $1.35 for every dollar of revenue they earn. The obvious consequence will be substantial rises in cost to users. The only answer for customers is to find ways to dramatically reduce token consumption. This article offers several ways to accomplish that.

Summary: This tutorial focuses on practical techniques to reduce Claude Code token consumption by approximately 50%, covering token-saving strategies that have been tested and validated. Topics likely include prompt optimization, context window management, caching strategies, and efficient instruction patterns to minimize API costs while maintaining output quality when using Claude Code for agentic coding tasks.

MSP Relevance: For MSPs actively using or planning to use Claude Code to build automation scripts, integrations, or client AI solutions, reducing API costs by 50% is directly impactful to profitability. As MSPs scale AI usage across multiple clients or internal workflows, token costs compound quickly. Practical cost-control techniques allow MSPs to build more sustainable AI service margins. This is actionable for any MSP using Anthropic’s API in production or development environments.

Recommended Action Items:

Review and implement the token-saving techniques outlined in the tutorial for all Claude Code-based development workflows.

Establish internal guidelines for prompt engineering that incorporate cost-reduction best practices.

Factor token optimization strategies into pricing models when building AI services billed on consumption.

Test caching and context management techniques to reduce costs on repetitive MSP automation tasks.

An AWS user just stared down a $30,000 invoice after a Claude adventure on Bedrock with no guardrails catching it

[Cost Anomaly Detection failed entirely]

HMC: Here’s a cautionary tale you won’t want to miss, and won’t want to miss sharing with your customers as you propose measures to protect them against experiencing the same horror show.

Reading this brought back memories of the earliest days of Azure, when it was still called Windows Azure and Microsoft provided no mechanism for tracking utilization (now called consumption) until the monthly “overages” statement arrived, invoicing all utilization beyond the subscribed amount.

When customers started receiving invoices in the amount of tens or hundreds of thousands of dollars, the screams could be heard around the C-level and many careers were cut short.

Here we are again.

Summary: An AWS user was hit with a $30,000 bill after running Claude on AWS Bedrock without proper cost controls in place. AWS’s built-in Cost Anomaly Detection failed to catch the runaway spending before it escalated to a massive invoice. The incident highlights a critical gap in cloud AI cost governance — when AI workloads (especially LLM inference) run unconstrained, costs can spiral rapidly and existing cloud billing safeguards may not respond quickly enough to prevent significant financial damage. The story is a cautionary tale about deploying AI services on consumption-based cloud platforms without implementing hard spending limits, budget alerts, and usage guardrails at the application and API level.

MSP Relevance: This is highly relevant for MSPs building or managing AI solutions on cloud platforms for clients. MSPs who deploy AI workloads on AWS Bedrock, Azure OpenAI, or similar consumption-based services face real liability if client environments lack proper cost guardrails. This incident demonstrates that native cloud cost controls (like AWS Cost Anomaly Detection) are NOT sufficient protection against runaway AI inference costs. MSPs need to proactively implement hard API usage limits, per-session token caps, budget alerts with auto-shutdown triggers, and client-facing cost dashboards. This is also a strong selling point for MSP-managed AI governance services — clients who self-deploy AI without MSP oversight face exactly this risk.

Recommended Action Items:

Audit all client AWS Bedrock, Azure OpenAI, and similar AI API deployments for hard spending limits and token usage caps

Implement multi-layer cost controls: API-level rate limits, AWS Budgets with SNS alerts, and auto-shutdown Lambda functions triggered at spend thresholds

Create a standard AI cost governance checklist to include in all AI project scopes and managed service agreements

Use this incident as a client education opportunity to position MSP-managed AI oversight as a risk mitigation service
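As one illustration of an application-level guardrail of the kind described above, here is a minimal Python sketch of a per-session token and spend cap that refuses a call before it is made. The class names, the caps, and the `usd_per_1k_tokens` rate are hypothetical; real pricing varies by model and provider, and this does not replace provider-side budgets and alerts.

```python
from dataclasses import dataclass


class BudgetExceeded(RuntimeError):
    """Raised when a call would push a session past a hard cap."""


@dataclass
class SessionBudget:
    max_tokens: int            # hard per-session token cap
    max_spend_usd: float       # hard per-session spend cap
    usd_per_1k_tokens: float   # hypothetical blended rate; substitute real pricing
    tokens_used: int = 0

    @property
    def spend_usd(self) -> float:
        """Spend so far, derived from tokens used and the assumed rate."""
        return self.tokens_used / 1000 * self.usd_per_1k_tokens

    def charge(self, tokens: int) -> None:
        """Record usage, refusing any call that would breach either cap."""
        projected_tokens = self.tokens_used + tokens
        projected_spend = projected_tokens / 1000 * self.usd_per_1k_tokens
        if projected_tokens > self.max_tokens or projected_spend > self.max_spend_usd:
            # Fail closed: usage is not recorded and the caller must stop or escalate.
            raise BudgetExceeded(
                f"call of {tokens} tokens would exceed the session budget "
                f"({projected_tokens} tokens, ${projected_spend:.2f})"
            )
        self.tokens_used = projected_tokens


if __name__ == "__main__":
    budget = SessionBudget(max_tokens=10_000, max_spend_usd=1.00, usd_per_1k_tokens=0.01)
    budget.charge(4_000)          # accepted
    try:
        budget.charge(7_000)      # would total 11,000 tokens, so it is refused
    except BudgetExceeded as exc:
        print(f"blocked: {exc}")
```

The design choice here is to check the projected total before the request is sent, so a runaway loop stops at the cap rather than being discovered on the invoice.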

Agentic AI’s Hidden Data Trail—and How to Shrink It

HMC: Members of the Institute of Electrical and Electronics Engineers (IEEE) resent our channel’s use of the term “engineer” to describe our professionals, citing the significant investments they’ve made in earning that title.

For those of us who love to get “under the hood” of the technologies we work with, the IEEE Spectrum articles are a joy to read. Much deeper insight than you’ll find anywhere else. And when they address the lifeblood of our channel, I want to be sure everyone is aware of it and has a chance to benefit from it.

Summary: This IEEE Spectrum article addresses the security and privacy risks created by agentic AI systems, specifically focusing on the data trails these systems generate during operation. The core argument is that AI agents — which autonomously execute multi-step tasks, call APIs, access files, and interact with external services — accumulate significant data footprints that create security and compliance liabilities. The article advocates for three key principles: minimizing data retention by agents, enforcing task-specific (least-privilege) permissions rather than broad access, and implementing strict data expiration policies so sensitive information isn’t retained beyond its useful life. The piece highlights that unlike traditional software, AI agents often cache context, store intermediate results, and log interactions in ways that aren’t immediately visible to administrators. This creates hidden attack surfaces and potential compliance violations, particularly in regulated industries. The guidance is framed around designing agentic systems with privacy-by-default architectures rather than bolting on controls after deployment.

MSP Relevance: As MSPs build and deploy agentic AI solutions for clients — automating helpdesk triage, IT operations, security monitoring, or business workflows — they inherit responsibility for the data trails those agents create. This article provides a concrete security framework MSPs can apply both internally and in client deployments. Practically, MSPs should audit every agentic workflow they deploy to understand what data is cached, logged, or retained. For regulated clients (healthcare, finance, legal), data expiration policies and least-privilege agent permissions aren’t optional — they’re compliance requirements. MSPs can differentiate their AI practice by building governance controls into their agentic solutions from day one: scoped API credentials per task, automatic log purging, and audit trails that show clients exactly what data their AI agents touched. This is also a strong sales conversation starter — MSPs can position themselves as the ‘responsible AI’ partner who builds secure-by-design agentic systems, versus competitors who deploy agents without governance guardrails.

Recommended Action Items:

Audit all current agentic AI deployments (internal and client-facing) to inventory what data is retained, cached, or logged by each agent

Implement least-privilege permission models for all AI agents — create task-specific credentials rather than using broad admin-level API keys

Establish data expiration policies for agent logs and intermediate data stores, aligned to client compliance requirements (HIPAA, SOC 2, etc.)

Develop a standard ‘Agentic AI Security Checklist’ to include in your AI service delivery framework and use it as a client-facing differentiator
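Two of the controls above, task-specific permissions and data expiration, can be sketched in a few lines of Python. This is a minimal illustration under assumed names: `TaskCredential`, `AgentLogRecord`, the action strings, and the 30-day retention window in the usage example are all hypothetical, and actual retention periods should come from each client’s compliance requirements.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class TaskCredential:
    """A credential scoped to one task and an explicit allow-list of actions."""
    task: str
    allowed_actions: frozenset[str]

    def authorize(self, action: str) -> bool:
        # Least privilege: anything not explicitly allowed is denied.
        return action in self.allowed_actions


@dataclass
class AgentLogRecord:
    created_at: datetime
    payload: str


def purge_expired(
    records: list[AgentLogRecord],
    retention: timedelta,
    now: Optional[datetime] = None,
) -> list[AgentLogRecord]:
    """Return only the records still inside the retention window."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - retention
    return [record for record in records if record.created_at >= cutoff]


if __name__ == "__main__":
    cred = TaskCredential("ticket-triage", frozenset({"tickets:read", "tickets:comment"}))
    print(cred.authorize("tickets:read"))   # allowed for this task
    print(cred.authorize("users:delete"))   # outside the task's scope

    now = datetime(2026, 1, 31, tzinfo=timezone.utc)
    logs = [
        AgentLogRecord(now - timedelta(days=45), "old intermediate result"),
        AgentLogRecord(now - timedelta(days=5), "recent interaction"),
    ]
    kept = purge_expired(logs, retention=timedelta(days=30), now=now)
    print([record.payload for record in kept])
```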

Source: https://spectrum.ieee.org/agentic-ai-security

AI Integration Into PSA and Security Platforms Forces ... - YouTube

HMC: Our dear friend Dave Sobel’s podcast, The Business of Tech on MSP Radio, brings us Dave’s unique combination of decades of channel experience, deep technical grounding, a thorough understanding of the dynamics of the IT industry, and tremendous AI and digital production skills, with a truly enjoyable and insightful host to bring it all together.

Some of the earliest AI applications developed supported help desk and service desk operations. In this episode, Dave explores how AI is now bringing more and more value, more and more autonomously, to every MSP’s service operations. For an MSP, this may be one of the most critical topics we’ve addressed.

Summary: This YouTube episode focuses on a structural shift in managed service environments: AI is no longer just a reporting or analytics layer but is becoming an active workflow actor embedded directly within PSA and security platforms. The episode examines how AI integration into core MSP tooling — ticketing, alerting, incident response, and security workflows — changes how services are delivered. The framing is that AI is moving from passive assistant to autonomous participant in MSP operational processes, triggering actions, routing tickets, and responding to security events without human initiation.

MSP Relevance: This is highly relevant to MSPs actively building or evolving their AI practice. The core insight — AI as an active workflow actor in PSA and security platforms — directly maps to where the MSP industry is heading. MSPs need to understand how to configure, govern, and sell AI-driven automation within tools like ConnectWise, Autotask, Datto, or SentinelOne. This episode likely covers practical integration patterns MSPs can adopt today, such as AI-driven ticket triage, automated escalation, and AI-assisted threat response. MSPs should watch this to understand both the operational implications (how to restructure workflows) and the sales implications (how to package AI-enhanced services for clients at a premium).

Recommended Action Items:

Watch the full episode and document specific AI integration patterns discussed for PSA and security platforms relevant to your current toolstack.

Audit your current PSA and security platform AI capabilities — identify gaps where AI could become an active workflow actor rather than a passive reporting tool.

Develop a tiered service offering that differentiates AI-automated response (faster SLA, lower cost) from human-managed response for client proposals.

Evaluate AI workflow automation features in your PSA (e.g., ConnectWise Sidekick, Autotask AI) and create a 90-day implementation roadmap.

Source:

ResolveGrid Launches Agentic AI Platform That Cuts Field Service Dispatches in Half

HMC: Following Dave Sobel’s excellent podcast on the subject, here’s one company’s solution offering for MSPs seeking to drive quality up while driving expense and error rate down.

Summary: ResolveGrid has launched an agentic AI platform specifically targeting field service operations, claiming to reduce field service dispatches by 50%. The platform uses AI agents to autonomously triage incoming service requests, diagnose issues remotely, and resolve problems without requiring a technician dispatch. It integrates with existing field service management (FSM) and ticketing systems, applying multi-step reasoning to determine whether an issue can be resolved remotely before escalating to human dispatch. The platform is positioned for industries with high field service volumes — utilities, facilities management, and IT services. Key capabilities include automated root cause analysis, guided remote resolution workflows, and predictive dispatch prioritization. The company reports early customers have seen significant reductions in truck rolls and mean time to resolution (MTTR).

MSP Relevance: This is highly relevant for MSPs with field service or on-site support components. Reducing unnecessary dispatches directly cuts labor and travel costs — one of the highest cost drivers in break-fix and managed services. The agentic triage model ResolveGrid uses mirrors what MSPs should be building or adopting: AI agents that attempt remote resolution before escalating to human technicians. MSPs should evaluate this platform or similar agentic dispatch tools as a way to improve margins on service delivery. Even if ResolveGrid itself isn’t the right fit, the architecture — AI triage → remote resolution attempt → human escalation — is a workflow pattern MSPs can implement using existing RMM and PSA tools augmented with AI agents. This also creates a compelling ROI story for clients paying for managed services.

Recommended Action Items:

Request a demo of ResolveGrid to evaluate fit for your field service dispatch workflows

Map your current dispatch decision tree and identify steps where an AI agent could attempt remote resolution before escalating

Quantify your current truck roll rate and cost-per-dispatch to build an ROI model for agentic triage adoption

Explore whether your existing RMM/PSA vendor offers AI-assisted triage features that replicate this dispatch-reduction pattern
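The truck roll and cost-per-dispatch numbers in the action items above can be turned into a rough ROI model with a few lines of Python. This is a back-of-the-envelope sketch. Every figure in the example (ticket volume, dispatch count, cost per dispatch, platform cost) is a hypothetical placeholder, and the 50% reduction is the vendor's claim rather than a measured result.

```python
def truck_roll_rate(dispatches: int, tickets: int) -> float:
    """Fraction of tickets that end in an on-site dispatch."""
    if tickets <= 0:
        raise ValueError("tickets must be positive")
    return dispatches / tickets


def monthly_net_savings(
    dispatches_per_month: int,
    cost_per_dispatch: float,
    reduction_fraction: float,
    platform_cost_per_month: float = 0.0,
) -> float:
    """Avoided dispatch cost minus what the triage tooling costs."""
    if not 0.0 <= reduction_fraction <= 1.0:
        raise ValueError("reduction_fraction must be between 0 and 1")
    avoided_dispatches = dispatches_per_month * reduction_fraction
    return avoided_dispatches * cost_per_dispatch - platform_cost_per_month


if __name__ == "__main__":
    # Hypothetical inputs: replace with figures from your own PSA reports.
    rate = truck_roll_rate(dispatches=120, tickets=800)
    savings = monthly_net_savings(
        dispatches_per_month=120,
        cost_per_dispatch=250.0,
        reduction_fraction=0.5,  # vendor-claimed reduction, not verified
        platform_cost_per_month=3000.0,
    )
    print(f"Truck roll rate: {rate:.1%}")
    print(f"Estimated net monthly savings: ${savings:,.2f}")
```

Running the model at a more conservative `reduction_fraction`, such as 0.2, alongside the vendor figure gives a range to discuss with clients rather than a single optimistic number.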

Source:

Building an Evaluation Harness for Production AI Agents: A 12-Metric Framework From 100+ Deployments

HMC: Most of us who have been developing any kind of application with Claude Code, Codex, or others have experienced the inconsistency in performance that sometimes occurs when something underneath has changed. An AI stack has many layers, and a change in any one of them can ripple up and down the rest.

A big part of the MSP value proposition must be to exert vigilance over how well everything is working. We must become the quality hawks. I’ll continue to include articles about evaluation tools and quality controls here in AgenticMSP.

Summary: This article presents a 12-metric evaluation framework for production AI agents, derived from 100+ enterprise deployments. The framework covers four key domains: retrieval quality (e.g., context precision, recall), generation quality (faithfulness, answer relevance), agent behavior (tool selection accuracy, task completion rate, loop detection), and production health (latency, error rates, cost per query, fallback rates). The author argues that most teams instrument their agents poorly — tracking only surface metrics like user satisfaction — while ignoring the deeper signals that reveal why agents fail. The framework is designed to be model-agnostic and applicable across RAG pipelines, multi-step agents, and tool-using systems. Each metric includes a definition, why it matters, and suggested measurement approaches.

MSP Relevance: This is directly actionable for MSPs deploying AI agents for clients. Without a structured evaluation framework, MSPs have no way to guarantee SLA-level performance, diagnose failures, or demonstrate ROI. This 12-metric framework gives MSPs a ready-made quality assurance methodology they can apply to every client AI deployment — from RAG-based knowledge bots to multi-step automation agents. Specifically: retrieval metrics help MSPs tune knowledge base quality; generation metrics catch hallucinations before clients do; agent behavior metrics expose broken tool calls or infinite loops; production health metrics feed into monitoring dashboards and cost management. MSPs can package this framework as part of their AI managed services offering — providing ongoing agent health reporting as a differentiator. It also helps MSPs have credible, data-driven conversations with clients about agent performance rather than relying on anecdotal feedback.
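To make the retrieval metrics concrete, here is a simplified sketch of context precision and recall. It assumes plain set-based definitions over document IDs, which may differ from the exact formulas in the article. Evaluation libraries often use rank-aware or model-judged variants. The `kb-...` IDs are invented for illustration.

```python
from __future__ import annotations


def context_precision(retrieved: list[str], relevant: set[str]) -> float:
    """Share of retrieved documents that were actually relevant."""
    if not retrieved:
        return 0.0
    hits = sum(1 for doc_id in retrieved if doc_id in relevant)
    return hits / len(retrieved)


def context_recall(retrieved: list[str], relevant: set[str]) -> float:
    """Share of relevant documents that the retriever managed to find."""
    if not relevant:
        return 1.0  # nothing needed to be found
    return len(set(retrieved) & relevant) / len(relevant)


if __name__ == "__main__":
    # Hypothetical knowledge base article IDs for one client question.
    retrieved = ["kb-101", "kb-205", "kb-330", "kb-412"]
    relevant = {"kb-101", "kb-330", "kb-777"}
    print(f"precision = {context_precision(retrieved, relevant):.2f}")
    print(f"recall    = {context_recall(retrieved, relevant):.2f}")
```

Low precision suggests the knowledge base is returning noise that can mislead the model. Low recall suggests the right article exists but is not being found, which usually points to indexing or chunking problems.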

Recommended Action Items:

Adopt this 12-metric framework as the standard QA checklist for all client AI agent deployments

Build a monitoring dashboard template covering retrieval, generation, agent behavior, and production health metrics for recurring AI managed services reporting

Use task completion rate and tool selection accuracy as primary SLA metrics in client AI service agreements

Implement fallback rate and loop detection alerts in your RMM or observability stack for any deployed AI agents
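The agent behavior and production health metrics named in the action items above can be computed from ordinary run logs. The sketch below shows one way to do it. The `AgentRun` record, the tool names, the "three identical calls in a row" loop rule, and the alert thresholds are all assumptions for illustration and are not taken from the article.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class AgentRun:
    completed: bool            # did the agent finish the task?
    used_fallback: bool        # did it hand off to a fallback path?
    tools_called: list[str]    # tool names in the order they were invoked
    tools_expected: list[str]  # tool names a reviewer judged correct


def has_loop(tools_called: list[str], max_repeats: int = 3) -> bool:
    """Flag a run when one tool is called max_repeats times consecutively."""
    streak = 1
    for prev, cur in zip(tools_called, tools_called[1:]):
        streak = streak + 1 if cur == prev else 1
        if streak >= max_repeats:
            return True
    return False


def summarize(runs: list[AgentRun]) -> dict[str, float]:
    """Aggregate per-run records into four rates between 0 and 1."""
    if not runs:
        raise ValueError("need at least one run")
    n = len(runs)
    return {
        "task_completion_rate": sum(r.completed for r in runs) / n,
        "tool_selection_accuracy": sum(
            r.tools_called == r.tools_expected for r in runs
        ) / n,
        "fallback_rate": sum(r.used_fallback for r in runs) / n,
        "loop_rate": sum(has_loop(r.tools_called) for r in runs) / n,
    }


def alerts(summary: dict[str, float]) -> list[str]:
    """Return the names of metrics that breach hypothetical thresholds."""
    breached = []
    if summary["fallback_rate"] > 0.25:  # assumed threshold
        breached.append("fallback_rate")
    if summary["loop_rate"] > 0.0:       # assumed: any loop is worth a look
        breached.append("loop_rate")
    return breached


if __name__ == "__main__":
    runs = [
        AgentRun(True, False, ["lookup_ticket", "reset_password"],
                 ["lookup_ticket", "reset_password"]),
        AgentRun(False, True, ["lookup_ticket"] * 3,
                 ["lookup_ticket", "restart_service"]),
        AgentRun(True, False, ["search_kb"], ["search_kb"]),
        AgentRun(True, True, ["search_kb", "reset_password"],
                 ["search_kb", "restart_service"]),
    ]
    summary = summarize(runs)
    print(summary)
    print("alerts:", alerts(summary))
```

The same summary dictionary can feed both a client-facing report and the alerting rules, so the numbers in an SLA review match the numbers that paged your team.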

How to learn Claude Code for free with Anthropic’s AI courses - one took me just 20 minutes

HMC: You’re wrong. I’m seldom so absolute about these things, but if you think agentic engineering or even mere vibe coding is beyond your capabilities, you’re wrong. My own recent experience proves this to me. I haven’t touched a stitch of programming since 1988 and yet I’ve been able to produce some very useful tools for myself in the course of developing this publication and the rest of my writing business.

Give yourself a shot. Get a little training and take Claude Code for a spin. You’ll be amazed at what you can do.

Summary: Anthropic has released a free online course library covering Claude, Claude Code, AI agents, and the Model Context Protocol (MCP). One course highlighted by ZDNet took only 20 minutes to complete. Claude Code is Anthropic’s agentic coding tool that can autonomously write, edit, and execute code. MCP is an open standard for connecting AI models to external tools and data sources. The free courses provide structured learning paths for developers and technical professionals looking to build with Anthropic’s AI stack without upfront training costs.

MSP Relevance: This is directly actionable for MSPs building AI practices. Claude Code and MCP are highly relevant tools for MSPs: Claude Code can automate scripting, code generation, and technical tasks that MSP engineers perform daily, while MCP is a foundational protocol for connecting AI agents to client systems, APIs, and data sources — exactly the kind of integration work MSPs do when building AI solutions. Free, short-form courses lower the barrier for MSP technical staff to get hands-on with agentic AI. MSPs can use these courses to upskill their team quickly, then apply Claude Code to automate internal workflows or build client-facing AI solutions. MCP knowledge is particularly valuable as it’s becoming a standard for AI tool integration across vendors.
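For a sense of what "connecting AI agents to client systems" looks like on the wire, MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with a tool name and an arguments object. The sketch below only builds such a request as a JSON string. The tool name `psa_get_ticket` and its `ticket_id` argument are hypothetical and do not correspond to any real PSA integration.

```python
import json
from itertools import count

# JSON-RPC requests carry an id so responses can be matched to requests.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


if __name__ == "__main__":
    # Hypothetical tool exposed by a hypothetical PSA-facing MCP server.
    print(build_tool_call("psa_get_ticket", {"ticket_id": "T-1042"}))
```

In practice an MCP client library handles this framing, along with the initialization handshake and transport. The point of the sketch is that each client system becomes a named tool with structured arguments, which is a familiar shape for anyone who has written API integrations.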

Recommended Action Items:

Enroll technical staff in Anthropic’s free Claude Code and MCP courses to build foundational agentic AI skills

Evaluate Claude Code as a productivity tool for MSP engineers handling scripting, automation, and documentation tasks

Explore MCP as an integration layer for connecting AI agents to client PSA, RMM, or line-of-business systems

Build a short internal pilot using Claude Code to automate a repetitive MSP workflow (e.g., script generation, runbook drafting)

Source: https://www.zdnet.com/article/how-to-learn-claude-code-with-free-anthropic-ai-courses-online/

Synthesized from today’s news — recurring themes and highest-priority actions for your AI practice.

1. AI Costs Spiral Without Guardrails

Uncontrolled AI agent usage can generate surprise five-figure cloud bills overnight.

Set hard AI spending limits immediately

2. Agentic AI Creates Hidden Data Risks

AI agents leave extensive data trails that expand your clients’ attack surface.

Audit agentic AI data retention policies

Agentic AI’s Hidden Data Trail

3. RMM Phishing Attacks Targeting MSPs

Attackers exploit RMM tools to bypass security and compromise MSP client networks.

Enable MFA on all RMM platforms now

4. AI Reshaping MSP Service Delivery

MSPs not adopting AI tools risk losing efficiency and clients to competitors.

Pilot one AI tool in service desk

How AI Tools Reshape MSP Strategies

5. AI Discovers Security Vulnerabilities Faster

Frontier AI models rapidly find vulnerabilities, raising stakes for MSP security practices.

Add AI-assisted vulnerability scanning to offerings

Frontier AI Security Vulnerability Discovery

